Overview

Apache Kafka has become a cornerstone technology for building real-time data pipelines and streaming applications. At the heart of any Kafka implementation are the client libraries that allow applications to interact with Kafka clusters. This comprehensive guide explores Kafka clients, their configuration, and best practices to ensure optimal performance, reliability, and security.

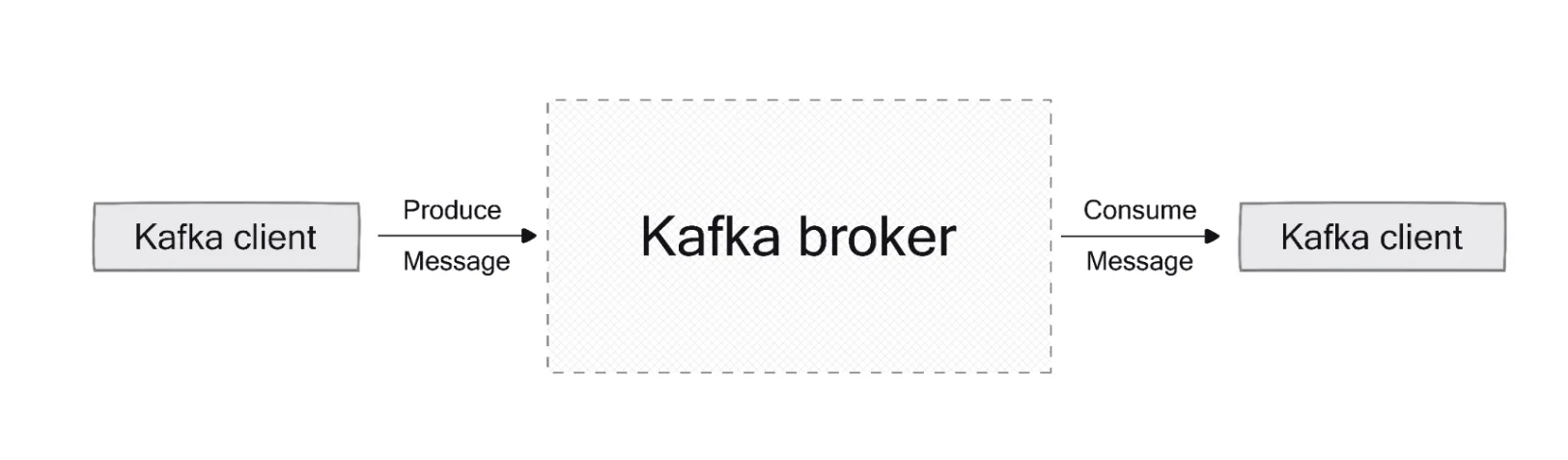

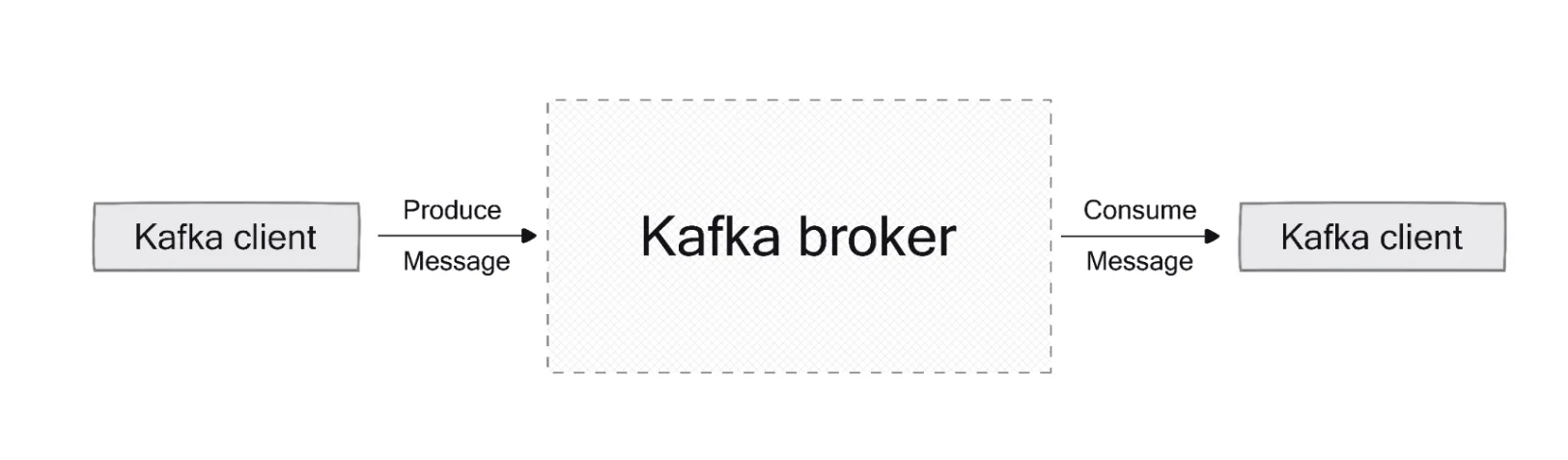

Understanding Kafka Clients

Kafka clients are software libraries that enable applications to communicate with Kafka clusters. They provide the necessary APIs to produce messages to topics and consume messages from topics, forming the foundation for building distributed applications and microservices.

Types of Kafka Clients

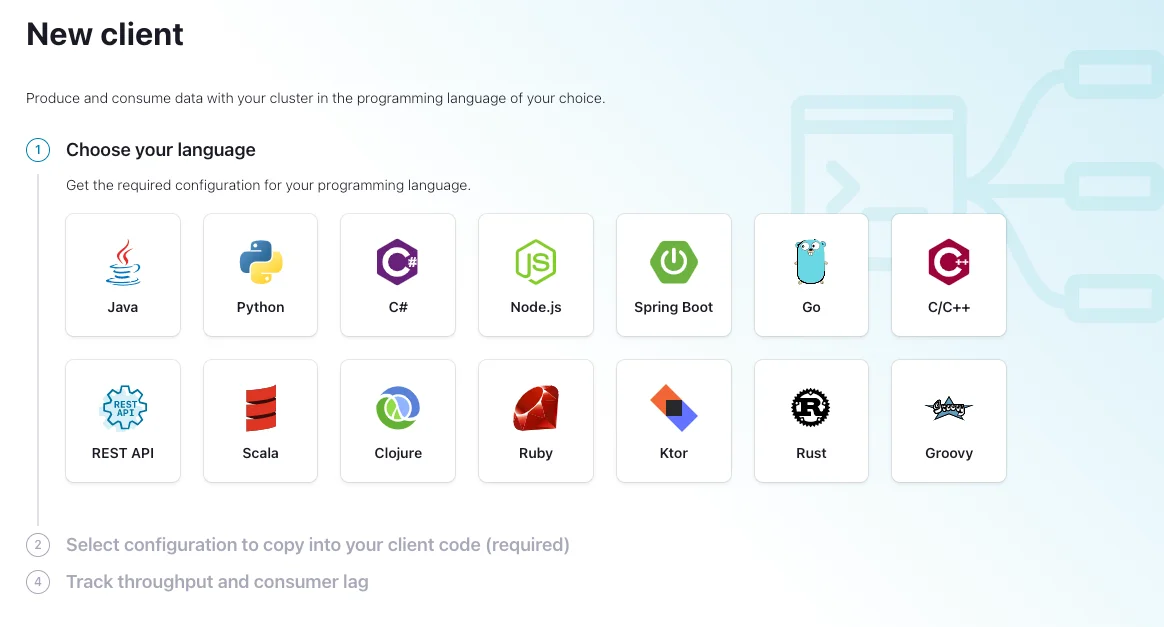

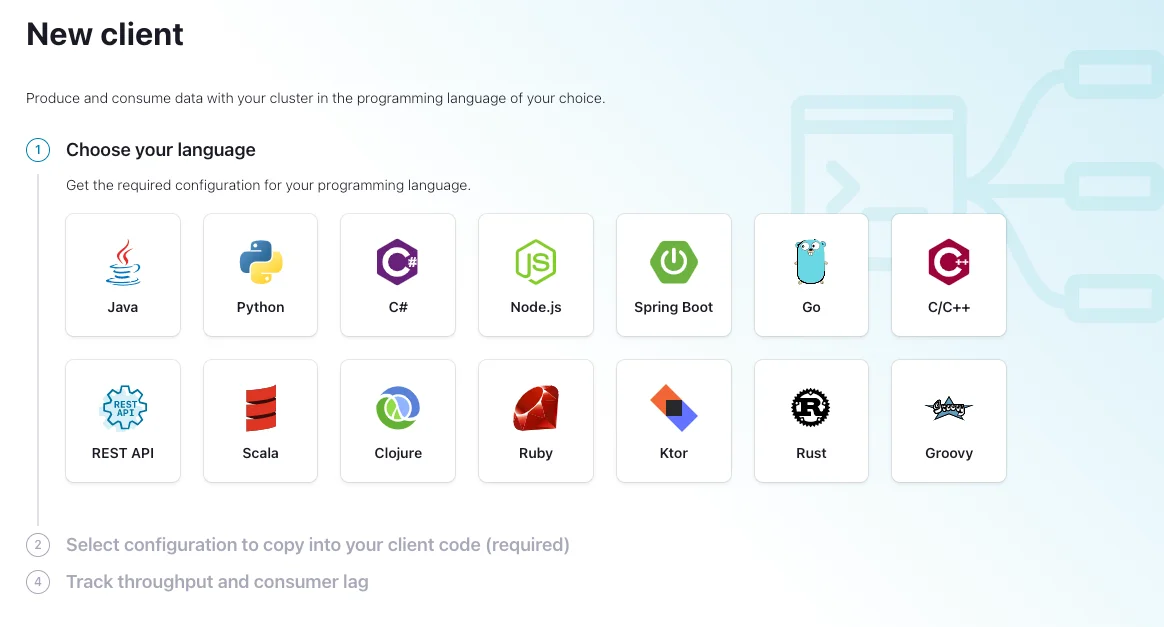

The official Confluent-supported clients include:

-

Java : The original and most feature-complete client, supporting producer, consumer, Streams, and Connect APIs

-

C/C++ : Based on librdkafka, supporting admin, producer, and consumer APIs

-

Python : A Python wrapper around librdkafka

-

Go : A Go implementation built on librdkafka

-

.NET : For .NET applications

-

JavaScript : For Node.js and browser applications

These client libraries follow Confluent's release cycle, ensuring enterprise-level support for organizations using Confluent Platform.

Producer Clients: Concepts and Configuration

Producers are responsible for publishing data to Kafka topics. Their performance and reliability directly impact the entire streaming pipeline.

Key Producer Configurations

Several configuration parameters significantly influence producer behavior:

-

Batch Size and Linger Time

-

batch.size : Controls the number of bytes accumulated before sending

-

linger.ms : Determines how long to wait for more records before sending a batch

-

Acknowledgments

-

acks : Determines the level of delivery guarantees

-

acks=all : Provides strongest guarantee but impacts throughput

-

acks=0 : Offers maximum throughput but no delivery guarantees

-

Retry Mechanism

-

retries : Number of retries before failing

-

retry.backoff.ms : Time between retries

-

delivery.timeout.ms : Upper bound for the total time between sending and acknowledgment

-

Idempotence and Transactions

-

enable.idempotence=true : Prevents duplicate messages when retries occur

-

Transaction APIs: Enable exactly-once semantics

Producer Best Practices

For optimal producer performance, consider these best practices:

-

Throughput Optimization

-

Balance batch size and linger time based on latency requirements

-

Implement compression to reduce data size and improve throughput

-

Use appropriate partitioning strategies for even data distribution

-

Error Handling

-

Implement robust retry mechanisms with exponential backoff

-

Enable idempotence for exactly-once processing semantics

-

Use synchronous commits for critical data and asynchronous for higher throughput

-

Resource Allocation

-

Monitor and adjust memory allocation based on performance metrics

-

Set appropriate buffer sizes based on message volume

Consumer Clients: Concepts and Configuration

Consumers read messages from Kafka topics and process them. Proper consumer configuration ensures efficient data processing and prevents issues like consumer lag.

Key Consumer Configurations

Important consumer configuration parameters include:

-

Group Management

-

group.id : Identifies the consumer group

-

heartbeat.interval.ms : Frequency of heartbeats to the coordinator

-

max.poll.interval.ms : Maximum time between poll calls before rebalancing

-

Offset Management

-

enable.auto.commit : Controls automatic offset commits

-

auto.offset.reset : Determines behavior when no offset is found ("earliest", "latest", "none")

-

max.poll.records : Maximum records returned in a single poll call

-

Performance Settings

-

fetch.min.bytes and fetch.max.wait.ms : Control data fetching behavior

-

max.partition.fetch.bytes : Maximum bytes fetched per partition

Consumer Best Practices

For reliable and efficient consumers, implement these best practices:

-

Partition Management

-

Choose the right number of partitions based on throughput requirements

-

Maintain consumer count consistency relative to partitions

-

Use a replication factor greater than 2 for fault tolerance

-

Offset Commit Strategy

-

Disable auto-commit ( enable.auto.commit=false ) for critical applications

-

Implement manual commit strategies after successful processing

-

Balance commit frequency to minimize reprocessing risk while maintaining performance

-

Error Handling

-

Implement robust error handling for transient errors

-

Have a strategy for handling poison pill messages (messages that consistently fail processing)

-

Configure appropriate retry.backoff.ms values to prevent retry storms

Security Best Practices

Security is paramount when implementing Kafka clients in production environments. Key security considerations include:

-

Authentication

-

Implement SASL (SCRAM, GSSAPI) or mTLS for client authentication

-

Configure SSL/TLS to encrypt data in transit

-

Use environment variables or secure vaults to manage credentials rather than hardcoding them

-

Authorization

-

Implement ACLs (Access Control Lists) to control read/write access to topics

-

Follow the principle of least privilege when assigning permissions

-

Enable zookeeper.set.acl in secure clusters to enforce access controls

-

Secret Management

-

Avoid storing secrets as cleartext in configuration files

-

Consider using Confluent's Secret Protection or the Connect Secret Registry

-

Implement envelope encryption for protecting sensitive configuration values

Performance Tuning and Monitoring

Achieving optimal performance requires careful monitoring and tuning of Kafka clients.

Performance Optimization Strategies

-

JVM Tuning (for Java clients)

-

Allocate sufficient heap space

-

Configure garbage collection appropriately

-

Consider using G1GC for large heaps

-

Network Configuration

-

Optimize socket.send.buffer.bytes and socket.receive.buffer.bytes

-

Adjust connections.max.idle.ms to manage connection lifecycle

-

Configure appropriate timeouts based on network characteristics

-

Compression Settings

-

Enable compression ( compression.type=snappy or gzip ) for better network utilization

-

Balance compression ratio against CPU usage

Monitoring Kafka Clients

Implement comprehensive monitoring for early detection of issues:

-

Key Metrics to Watch

-

Consumer lag: Difference between the latest produced offset and consumed offset

-

Produce/consume throughput: Messages processed per second

-

Request latency: Time taken for requests to complete

-

Error rates: Frequency of different error types

-

Monitoring Tools

-

JMX metrics for Java applications

-

Prometheus and Grafana for visualization

-

Conduktor or other Kafka UI tools for comprehensive cluster monitoring

-

Alerting

-

Set up alerts for critical metrics exceeding thresholds

-

Implement progressive alerting based on severity

-

Ensure alerts include actionable information

Common Issues and Troubleshooting

Even with best practices in place, issues can arise. Here are common problems and their solutions:

-

Broker Not Available

-

Check if brokers are running

-

Verify network connectivity

-

Review firewall settings that might block connections

-

Leader Not Available

-

Ensure broker that went down is restarted

-

Force a leader election if necessary

-

Check for network partitions

-

Offset Out of Range

-

Verify retention policies

-

Reset consumer group offsets to a valid position

-

Adjust auto.offset.reset configuration

-

In-Sync Replica Alerts

-

Address under-replicated partitions promptly

-

Check for resource constraints on brokers

-

Consider adding more brokers or redistributing partitions

-

Slow Production/Consumption

-

Review and adjust batch sizes

-

Check for network saturation

-

Optimize serialization/deserialization

Client Development Best Practices

When developing applications that use Kafka clients, follow these best practices:

-

Version Compatibility

-

Use the latest supported client version for your Kafka cluster

-

Be aware of protocol compatibility between clients and brokers

-

Consider the impact of client upgrades on existing applications

-

Connection Management

-

Implement connection pooling for better resource utilization

-

Handle reconnection logic gracefully

-

Properly close resources when they're no longer needed

-

Error Handling

-

Design for fault tolerance with appropriate retry mechanisms

-

Implement dead letter queues for messages that repeatedly fail processing

-

Log detailed error information for troubleshooting

-

Testing and Validation

-

Implement comprehensive testing of client applications

-

Include failure scenarios in test cases

-

Perform load testing to understand performance characteristics under stress

Web User Interfaces for Kafka Management

Several web UI tools can simplify Kafka cluster management:

-

Conduktor

-

Offers intuitive user interface for managing Kafka

-

Provides monitoring, testing, and management capabilities

-

Features excellent UI/UX design

-

Redpanda Console

-

Lightweight alternative with clean interface

-

Offers topic management and monitoring

-

Provides schema registry integration

-

Apache Kafka Tools

-

Open-source options available

-

May require more setup and configuration

-

Often offer basic functionality for smaller deployments

These tools can complement your client applications by providing visibility into cluster operations and simplifying management tasks.

Check more tools here: Top 12 Free Kafka GUI Tools

Conclusion

Kafka clients form the foundation of any successful Kafka implementation. By understanding their configuration options and following best practices, you can ensure reliable, secure, and high-performance data streaming applications.

Key takeaways include:

-

Select appropriate client libraries based on your programming language and requirements

-

Configure producers and consumers with careful attention to performance, reliability, and security parameters

-

Implement proper error handling and monitoring

-

Follow security best practices to protect data and access

-

Regularly test and validate client applications under various conditions

-

Use management tools to gain visibility and simplify operations

By adhering to these guidelines, you'll be well-positioned to leverage the full potential of Apache Kafka in your data streaming architecture.

Still struggling with skyrocketing Kafka bills and the "ops tax" of manual disk management? It's time to stop babysitting your clusters. Try AutoMQ Cloud for Free and experience how diskless architecture slashes costs and automates scaling—no credit card required. See how others made the switch in our case studies or explore the project on GitHub.